CML Research Programme

Research programme at a glance

The long-term goal of our research is to develop a rigorous theoretical framework describing the neural, cognitive, and computational mechanisms of crossmodal learning. To this end, the research programme focuses on three primary sub-goals:

- to enrich our current understanding of the multisensory processes underlying the human mind and brain,

- to build detailed formal models that describe crossmodal learning in both humans and machines,

- to improve the performance of artificial systems (and humans) by exploiting this enriched understanding and applying these new models to tasks requiring a human-like conception of the world.

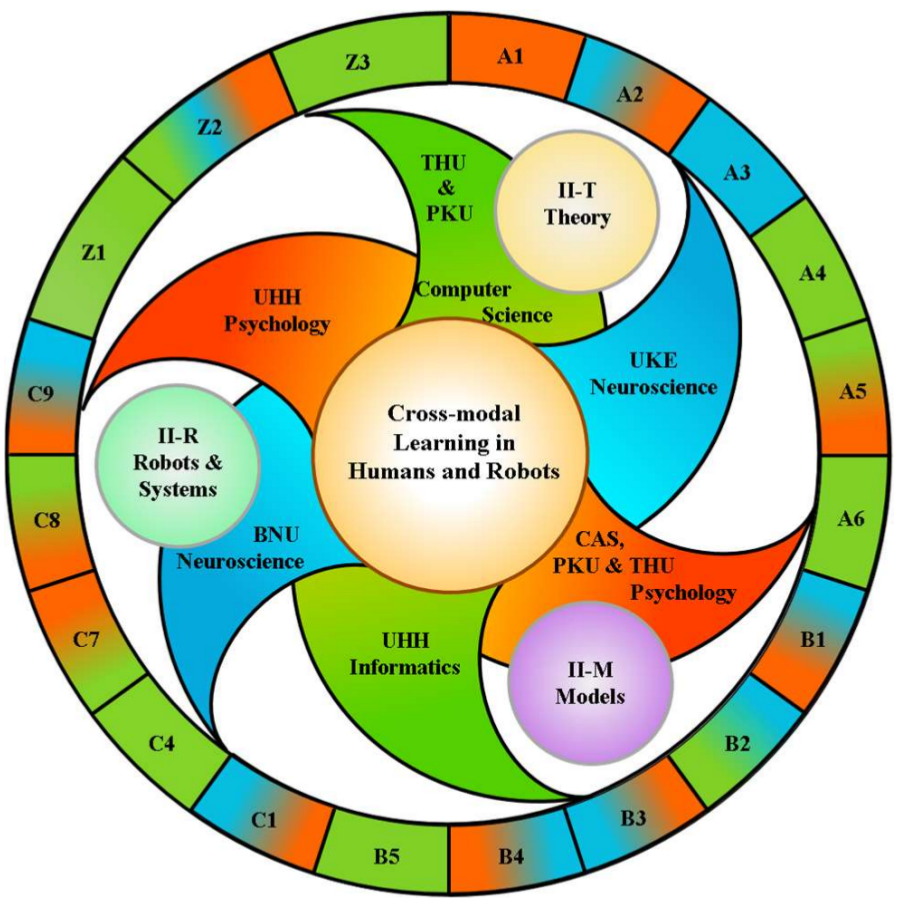

In the second funding period (2020-2023), the TRR comprises sixteen separate scientific research projects (A1..C9) complemented by three service projects (Z1..Z3) that work together towards achieving these objectives.

Each project examines crossmodal learning between at least two different sensory modalities: natural ones, such as those that carry visual, auditory, somatosensory, tactile, haptic, and proprioceptive information, and artificial ones with information sources such as sonar, range finders, RGBD, point clouds, brain signals, etc. Human sensory modalities can also be enhanced by artificial mechanisms, such as computer interfaces and brain-computer interfaces. Finally, language can also be considered a natural (but not a sensory) modality, used to directly communicate (abstract) concepts. Several aspects of spoken and written language are also the focus of CML subprojects.

The goals of the TRR require a highly interdisciplinary approach, supported by expertise from a broad range of disciplines and by a broad range of tools and methodologies. Each project uses at least one of the following research methods, which provide the foundation and framework for its approach:

- neuroimaging methods such as functional magnetic resonance imaging (fMRI), which estimates neural activity in the brain by measuring blood flow and has high spatial resolution but low temporal resolution;

- magnetoencephalography (MEG) and electroencephalography (EEG), which measure neural activity with greater temporal resolution but lower spatial resolution;

- event-related potentials (ERP), which are EEG recordings averaged over many trials to reduce background noise;

- electrocorticography (ECoG), in which electrodes are placed on the surface of the brain;

- non-invasive brain stimulation (NIBS) methods such as transcranial magnetic stimulation (TMS) and transcranial current stimulation (tD/ACS), which are used to modulate brain activity;

- behavioural studies, which can, for example, track eye movements, reaction times, and other observable results of human activity;

- models and methods for computational analysis, such as Bayesian, statistical, graphical, and symbolic models as well as supervised, unsupervised, and reinforcement-based learning methods;

- neural models such as recurrent neural networks, deep learning networks, self-organizing maps, oscillator models, etc.;

- integrated systems or agents, which combine multiple computational methods to produce complex behaviours.

Overall, the TRR deploys a comprehensive interdisciplinary approach that comprises essentially all relevant state-of-the-art methodology for the study of crossmodal learning in natural and artificial systems.
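The trial averaging behind ERPs, mentioned among the methods above, can be illustrated with a minimal sketch on synthetic data. Everything here is an assumption made for illustration: the bump-shaped "evoked response", the Gaussian noise model, the noise level, and the function names are hypothetical and do not come from any CML project. The sketch only shows that averaging many time-locked trials brings the estimate closer to the underlying signal than a single trial.

```python
import math
import random


def evoked_response(n_samples=100):
    """Hypothetical evoked signal: a smooth bump centred in the epoch."""
    centre = n_samples / 2
    width = n_samples / 10
    return [math.exp(-((t - centre) / width) ** 2) for t in range(n_samples)]


def simulate_trial(signal, noise_sd, rng):
    """One simulated trial: the fixed signal plus independent Gaussian noise."""
    return [s + rng.gauss(0.0, noise_sd) for s in signal]


def average_trials(trials):
    """Average time-locked trials sample by sample to estimate the ERP."""
    n_trials = len(trials)
    return [sum(samples) / n_trials for samples in zip(*trials)]


def rms_error(estimate, signal):
    """Root-mean-square deviation of an estimate from the true signal."""
    squared = [(e - s) ** 2 for e, s in zip(estimate, signal)]
    return math.sqrt(sum(squared) / len(squared))


rng = random.Random(0)  # fixed seed so the run is repeatable
signal = evoked_response()
trials = [simulate_trial(signal, noise_sd=2.0, rng=rng) for _ in range(400)]
erp = average_trials(trials)

print("single-trial RMS error:", rms_error(trials[0], signal))
print("400-trial average RMS error:", rms_error(erp, signal))
```

For independent noise, the residual noise in the average shrinks roughly with the square root of the number of trials, which is why averaging reduces background noise but does not remove it entirely.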

In the first phase of TRR 169, the research programme was organized into three thematic areas, namely (A) learning dynamics and adaptivity, (B) generalization and prediction, and (C) human interaction. Each project then worked towards at least one of the original six objectives; and each project also took part in one or more of three integration initiatives, namely II-T, which focused on the theory of crossmodal learning; II-M, which focused on building models of crossmodal learning; and II-R, which focused on demonstrating the centre’s crossmodal learning results on our robotics platforms.

The thematic areas and the integration initiatives have been very successful, both in structuring and in linking our research, and they will be kept for phase 2 of the TRR. The original six project objectives, however, have been replaced with a set of six more specific objectives, which act as binding themes to encourage closer cooperation and the exchange of ideas and concepts between subprojects.

Six binding themes — updated objectives

Significant progress in several areas, and in particular in deep learning, encourages us to pursue a more integrated set of objectives as binding themes for the second phase of the TRR. Adherence to these themes will allow us to evaluate and compare results easily and to work towards applications while continuing with basic research. Actual applications are planned for the third phase of the TRR.

- O1: Learning architectures. Just four years ago, even the leading deep learning (DL) architectures were still quite simple and straightforward. Today, much more complex DL architectures are proposed, often combining several sub-networks with specific purposes: pre-trained convolutional networks support vision and natural language processing tasks, residual layers allow for very deep structures, encoder-decoder pairs extract useful representations from unlabelled data, and recurrent layers provide memory and retain context (over different time-scales), while attention models learn to identify the relevant information in the context. These architectures, however, are mostly still designed by hand, and only after lengthy training does it become clear whether a particular design decision was successful. This makes it very interesting to compare such DL architectures with brain models from neuroscience with respect to similarities and differences in crossmodal processing, e.g., regarding early and late fusion architectures, laminar integration of sensory data in the cortex, the role of dynamic functional connectivity, the selection of types of learning rules, etc.

- O2: Learning strategies. Standard machine learning methods are designed to minimize the (expected) risk, typically a simple sum or expectation value of individual prediction errors as measured by a cost function. This works well in many cases but results in sub-optimal behaviour if the loss function is not chosen properly. TRR 169 will study how to make use of large prediction errors (for one-shot learning) when handling complex multimodal data; explore hierarchical Bayesian inference/models for sensor fusion; use model averaging; and try to identify causes of human error-making during crossmodal learning.

- O3: Robustness. Deep learning methods currently reach or even surpass human-level performance on specific (unimodal) perception tasks, but the same networks still lack robustness, with surprising failure cases even slightly outside the training data. While general mathematical results on the robustness of trained DL networks are not to be expected soon, TRR 169 hypothesises that crossmodal processing will enhance the robustness of deep networks. TRR 169 will also study multisensory topologies and network architectures in general. Regarding our robot demonstrators, we target robustness in sensorimotor behaviour in different and varying task contexts. Robustness is also a key topic in the studies on neural systems, both with respect to learning in the healthy brain and with respect to the impairment of crossmodal processing in patients.

- O4: Anticipation and prediction. At the moment, biological systems are still much stronger than AI systems at anticipating and predicting events in their environments. The project will study mechanisms of sequence interpretation to link previous event sequences with future sequence anticipation and prediction in an incremental manner. Crossmodal mechanisms are expected to be essential to achieve this goal. Linking studies in humans and in artificial systems, we will explore whether biological mechanisms for crossmodal prediction can inspire the implementation of control architectures in artificial systems.

- O5: Generalization and transfer. A challenge is how knowledge-based or knowledge-guided strategies can be applied simultaneously with crossmodal learning. For this, it is necessary to identify which transfer strategies can be used in which learning tasks and scenarios. A further issue is whether transfer occurs only for explicit learning or also for implicit learning processes. The project will draw on findings about generalization and transfer in human learning to devise computational approaches to multisensory skill learning.

- O6: Benchmarking. Most recent scientific studies involve special equipment, datasets, and software, and cannot easily be repeated by others. Even when datasets are available, results are often given only as averages (e.g. classification accuracy over the full dataset), and interesting failure cases are discussed only briefly, if at all. Therefore, the project will work on novel benchmarking approaches to ensure that the research results can be verified and applied by others. We will consider reliable benchmarking as a means to gain new insights, to document and understand failure cases, and to exploit errors for crossmodal learning. The project is also committed to providing open access to multimodal datasets, network architectures, hyperparameters, and software. Benchmarking will also involve comparisons of performance between humans and artificial systems.
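The concern raised under O6, that a single average can hide interesting failure cases, can be made concrete with a small sketch. The labels, predictions, and function names below are hypothetical toy data and helpers invented for illustration; they do not describe the project's actual benchmarking tools. The sketch reports the overall accuracy alongside a per-class breakdown and an explicit list of the misclassified items.

```python
from collections import defaultdict


def overall_accuracy(labels, predictions):
    """Fraction of items whose prediction matches the label."""
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    return correct / len(labels)


def per_class_accuracy(labels, predictions):
    """Accuracy computed separately for each true class."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for y, p in zip(labels, predictions):
        totals[y] += 1
        hits[y] += int(y == p)
    return {cls: hits[cls] / totals[cls] for cls in totals}


def failure_cases(labels, predictions):
    """List (index, true label, predicted label) for every error."""
    return [
        (i, y, p)
        for i, (y, p) in enumerate(zip(labels, predictions))
        if y != p
    ]


# Hypothetical toy data: an imbalanced two-class recognition task.
labels = ["cup"] * 8 + ["ball"] * 2
predictions = ["cup"] * 8 + ["cup", "ball"]

print("overall accuracy:", overall_accuracy(labels, predictions))
print("per-class accuracy:", per_class_accuracy(labels, predictions))
print("failure cases:", failure_cases(labels, predictions))
```

In this toy example the overall figure looks strong, while the breakdown shows that half of the rarer class is misclassified. Publishing the failure list together with the average is one simple way to make such cases visible to others.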

The three thematic areas of the TRR

The projects of the TRR fall into three thematic areas: A) dynamics of crossmodal adaptation, B) efficient crossmodal generalization and prediction, and C) crossmodal learning in human-machine interaction. Each of these represents a key aspect of crossmodal learning, serving as a common rubric for the closely related projects within each area.